# If running on colab, uncomment the line below and run this cell:

#!pip in stall devitofrom devito import *Goals:¶

- Introduce users to some of the core Devito objects including grids (

Grid), functions (Function,TimeFunction), equations (Eq) and operators (Operator) - Illusrate how the DSL can be used to produce a range of finite difference (FD) stencils

- Execute a simple operator

The main objective of this tutorial is to demonstrate how Devito and its SymPy-powered symbolic API can be used to solve partial differential equations using the finite difference method with highly optimized stencils in a few lines of Python. We demonstrate how computational stencils can be derived directly from the equation in an automated fashion and how Devito can be used to generate and execute, at runtime, the desired numerical scheme in the form of optimized C code.

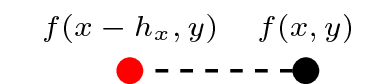

Defining the physical domain¶

Before we can begin creating finite-difference (FD) stencils we will need to give Devito a few details regarding the computational domain within which we wish to solve our problem. For this purpose we create a Grid object that stores the physical extent (the size) of our domain and knows how many points we want to use in each dimension to discretise our data.

grid = Grid(shape=(5, 6), extent=(1., 1.))

gridGrid[extent=(1.0, 1.0), shape=(5, 6), dimensions=(x, y)]Functions and data¶

To express our equation in symbolic form and discretise it using finite differences, Devito provides a set of Function types. A Function object:

- Behaves like a

sympy.Functionsymbol - Manages data associated with the symbol

To get more information on how to create and use a Function object, or any type provided by Devito, we can take a look at the documentation.

print(Function.__doc__)

Tensor symbol representing a discrete function in symbolic equations.

A Function carries multi-dimensional data and provides operations to create

finite-differences approximations.

A Function encapsulates space-varying data; for data that also varies in time,

use TimeFunction instead.

Parameters

----------

name : str

Name of the symbol.

grid : Grid, optional

Carries shape, dimensions, and dtype of the Function. When grid is not

provided, shape and dimensions must be given. For MPI execution, a

Grid is compulsory.

space_order : int or 3-tuple of ints, optional

Discretisation order for space derivatives. Defaults to 1. ``space_order`` also

impacts the number of points available around a generic point of interest. By

default, ``space_order`` points are available on both sides of a generic point of

interest, including those nearby the grid boundary. Sometimes, fewer points

suffice; in other scenarios, more points are necessary. In such cases, instead of

an integer, one can pass a 3-tuple ``(o, lp, rp)`` indicating the discretization

order (``o``) as well as the number of points on the left (``lp``) and right

(``rp``) sides of a generic point of interest.

shape : tuple of ints, optional

Shape of the domain region in grid points. Only necessary if ``grid`` isn't given.

dimensions : tuple of Dimension, optional

Dimensions associated with the object. Only necessary if ``grid`` isn't given.

dtype : data-type, optional

Any object that can be interpreted as a numpy data type. Defaults

to ``np.float32``.

staggered : Dimension or tuple of Dimension or Stagger, optional

Define how the Function is staggered.

initializer : callable or any object exposing the buffer interface, optional

Data initializer. If a callable is provided, data is allocated lazily.

allocator : MemoryAllocator, optional

Controller for memory allocation. To be used, for example, when one wants

to take advantage of the memory hierarchy in a NUMA architecture. Refer to

`default_allocator.__doc__` for more information.

padding : int or tuple of ints, optional

.. deprecated:: shouldn't be used; padding is now automatically inserted.

Allocate extra grid points to maximize data access alignment. When a tuple

of ints, one int per Dimension should be provided.

Examples

--------

Creation

>>> from devito import Grid, Function

>>> grid = Grid(shape=(4, 4))

>>> f = Function(name='f', grid=grid)

>>> f

f(x, y)

>>> g = Function(name='g', grid=grid, space_order=2)

>>> g

g(x, y)

First-order derivatives through centered finite-difference approximations

>>> f.dx

Derivative(f(x, y), x)

>>> f.dy

Derivative(f(x, y), y)

>>> g.dx

Derivative(g(x, y), x)

>>> (f + g).dx

Derivative(f(x, y) + g(x, y), x)

First-order derivatives through left/right finite-difference approximations

>>> f.dxl

Derivative(f(x, y), x)

Note that the fact that it's a left-derivative isn't captured in the representation.

However, upon derivative expansion, this becomes clear

>>> f.dxl.evaluate

f(x, y)/h_x - f(x - h_x, y)/h_x

>>> f.dxr

Derivative(f(x, y), x)

Second-order derivative through centered finite-difference approximation

>>> g.dx2

Derivative(g(x, y), (x, 2))

Notes

-----

The parameters must always be given as keyword arguments, since SymPy

uses ``*args`` to (re-)create the dimension arguments of the symbolic object.

Ok, let's create a function and look at the data Devito has associated with it. Please note that it is important to use explicit keywords, such as name or grid when creating Function objects.

f = Function(name='f', grid=grid)

ff.dataData([[0., 0., 0., 0., 0., 0.],

[0., 0., 0., 0., 0., 0.],

[0., 0., 0., 0., 0., 0.],

[0., 0., 0., 0., 0., 0.],

[0., 0., 0., 0., 0., 0.]], dtype=float32)By default, Devito Function objects use the spatial dimensions (x, y) for 2D grids and (x, y, z) for 3D grids. To solve a PDE over several timesteps a time dimension is also required by our symbolic function. For this Devito provides an additional function type, the TimeFunction, which incorporates the correct dimension along with some other intricacies needed to create a time stepping scheme.

g = TimeFunction(name='g', grid=grid)

gSince the default time order of a TimeFunction is 1, the shape of f is (2, 5, 6), i.e. Devito has allocated two buffers to represent g(t, x, y) and g(t + dt, x, y):

g.shape(2, 5, 6)A note on the size of the time dimension: By default, the time dimension will be allocated with the minimum number of elements required by the time-stepping scheme employed. The default time_order is 1 for which time stepping schemes will be of the form

that is, we need to store the field at the current and next time step and hence the size of 2. The c code will then be generated with modulo time-stepping to account for this (we'll see an example below). Therefore, given a time step we would have that

| Location stored | |

|---|---|

g.data[0] | |

g.data[1] | |

g.data[0] | |

g.data[1] | |

g.data[0] |

and so forth.

To solve a PDE that is second order in time directly, such as we'll do later when solving the second order wave-equation, we'll need to time-stepping scheme with a minimum time order of 2. If we define, e.g.,

g = TimeFunction(name='g', grid=grid, time_order=2)

then the time dimension of g would now have a size of 3 since the time-stepping scheme would look like

We would then have data stored as follows

| Location stored | |

|---|---|

g.data[0] | |

g.data[1] | |

g.data[2] | |

g.data[0] | |

g.data[1] | |

g.data[2] | |

g.data[0] |

If we with to save the value of a function at each time-step (and avoid over-writing the function at old time steps as is the default mode of operation) we can make use of the save parameter. That is, if we wish to store the function at 1000 different points in time (i.e. set the function to have a time-dimension size of 1000) we can define

g = TimeFunction(name='g', grid=grid, save=1000)

and in this case the c code would then be generated without modulo time-stepping.

Above, we've just scratched the surface of some basic configurations available. In practice, we may wish to subsample a field in time, implement a checkpoint method or so on. For further discussion, please see further tutorials and information located here, here and here.

Forming finite difference schemes¶

Let us start by considering the simple example of 2D linear convection. But first, lets review some basics of finite differences.

Recall that the Taylor series of in the spatial dimension takes the form

We can re-arrange the above expansion in the form

Thus, provided is small we can say

which will have an associated error

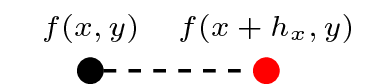

This is the well known forward difference approximation (or the right derivative) and is how spatial derivatives are approximated in 'space order' 1 finite difference schemes.

We can also write the following Taylor expansion

This leads to the backward difference (or left derivative) approximation:

In Devito, these derivatives can be represented via the following symbolic notation:

f.dxr # Forward difference/right derivativef.dxl # Backward difference/left derivativeAnd to see the actual stencils they represent, we enduce the evaluate method:

f.dxr.evaluatef.dxl.evaluatewhich we see correspond with the expressions derived from the Taylor series above. Note that we also have access to the expression f.dx which, in this particular instance, will produce a stencil equivalent to f.dxr. (We'll come back to this shortly when considering higher order stencils).

f.dx.evaluateThe functions we created above all act as sympy.Function objects, which means that we can form symbolic derivative expressions from them. Devito provides a set of shorthand expressions (implemented as Python properties) that allow us to generate finite differences in symbolic form. For example, the property f.dx denotes - only that Devito has already discretised it with a finite difference expression.

| Derivative | Shorthand | Discretised | Stencil |

|---|---|---|---|

| (right)) | f.dxr |

| |

| (left)) | f.dxl |

|

We can form stencils for approximating the derivatives in any other spatial or time dimension in a similar manner. Let us introduce the function . We have

where is some small increment in time. This then allows us to create 'time-stepping schemes'. For example, consider the partial differential equation describing 2D linear advection

discretizing only the temporal term for the time being we have

which we can re-arrange as

which is one of the most basic explicit time stepping schemes. Now if we approximated our spatial derivative with a backward difference approximation our scheme will become

(Note that here we have assumed an equal grid spacing ). Then, provided we know the status of our function at we can use the above scheme to compute the evolution of forward in time. Lets now implement this scheme in Devito.

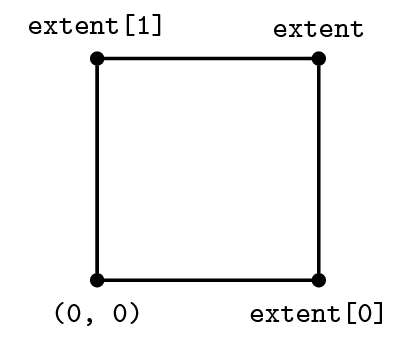

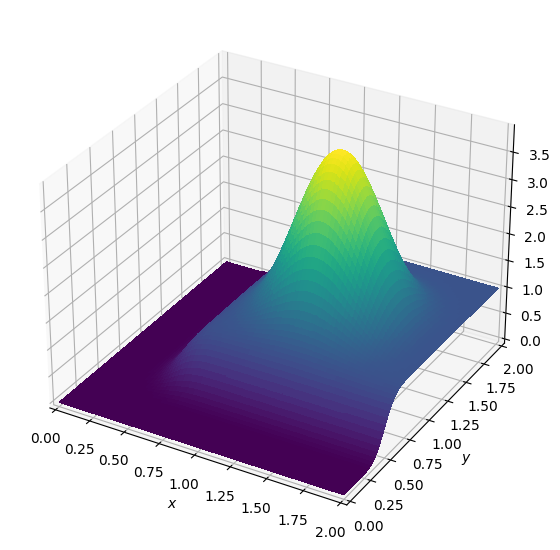

We first define our grid, the function and provide an initial condition:

from examples.cfd import init_smooth, plot_field

nt = 100 # Number of timesteps

dt = 0.2 * 2. / 80 # Timestep size (sigma=0.2)

c = 1 # Value for c

# Then we create a grid and our function

grid = Grid(shape=(81, 81), extent=(2., 2.))

u = TimeFunction(name='u', grid=grid)

# We can now set the initial condition and plot it

init_smooth(field=u.data[0], dx=grid.spacing[0], dy=grid.spacing[1])

init_smooth(field=u.data[1], dx=grid.spacing[0], dy=grid.spacing[1])

plot_field(u.data[0])

Next we formulate our equation and finite difference scheme:

eq = Eq(u.dt + c * u.dxl + c * u.dyl, 0)

eqstencil = solve(eq, u.forward)

update = Eq(u.forward, stencil)

updateWe can check our update scheme is the same as our pen and paper scheme by evaluateing it:

update.evaluateFinally we need to create and execute a Devito operator. This is where the magic happens: Devito takes our symbolic specification of the boundary value problem and through a number of compilation passes produces c code optimised for the target platform. We'll talk more about Operator objects in the following section, for now we'll just utilize it

op = Operator(update)

op(time=nt+1, dt=dt)

plot_field(u.data[0])Operator `Kernel` ran in 0.01 s

We can view the generated c code via

print(op.ccode)#define _POSIX_C_SOURCE 200809L

#define START_TIMER(S) struct timeval start_ ## S , end_ ## S ; gettimeofday(&start_ ## S , NULL);

#define STOP_TIMER(S,T) gettimeofday(&end_ ## S, NULL); T->S += (double)(end_ ## S .tv_sec-start_ ## S.tv_sec)+(double)(end_ ## S .tv_usec-start_ ## S .tv_usec)/1000000;

#include "stdlib.h"

#include "math.h"

#include "sys/time.h"

#include "xmmintrin.h"

#include "pmmintrin.h"

struct dataobj

{

void *restrict data;

int * size;

int * npsize;

int * dsize;

int * hsize;

int * hofs;

int * oofs;

} ;

struct profiler

{

double section0;

} ;

int Kernel(const float dt, const float h_x, const float h_y, struct dataobj *restrict u_vec, const int time_M, const int time_m, const int x_M, const int x_m, const int y_M, const int y_m, struct profiler * timers)

{

float (*restrict u)[u_vec->size[1]][u_vec->size[2]] __attribute__ ((aligned (64))) = (float (*)[u_vec->size[1]][u_vec->size[2]]) u_vec->data;

/* Flush denormal numbers to zero in hardware */

_MM_SET_DENORMALS_ZERO_MODE(_MM_DENORMALS_ZERO_ON);

_MM_SET_FLUSH_ZERO_MODE(_MM_FLUSH_ZERO_ON);

float r0 = 1.0F/h_x;

float r1 = 1.0F/h_y;

float r2 = 1.0F/dt;

for (int time = time_m, t0 = (time)%(2), t1 = (time + 1)%(2); time <= time_M; time += 1, t0 = (time)%(2), t1 = (time + 1)%(2))

{

/* Begin section0 */

START_TIMER(section0)

for (int x = x_m; x <= x_M; x += 1)

{

#pragma omp simd aligned(u:32)

for (int y = y_m; y <= y_M; y += 1)

{

u[t1][x + 1][y + 1] = dt*(-r0*(-u[t0][x][y + 1]) - r0*u[t0][x + 1][y + 1] - r1*(-u[t0][x + 1][y]) - r1*u[t0][x + 1][y + 1] + r2*u[t0][x + 1][y + 1]);

}

}

STOP_TIMER(section0,timers)

/* End section0 */

}

return 0;

}

There also exist a range of other convenient shortcuts. For example, the forward and backward stencil points, g(t+dt, x, y) and g(t-dt, x, y).

g.forwardg.backwardAnd of course, there's nothing to stop us taking derivatives on these objects:

g.forward.dtg.forward.dySecond derivatives and high-order stencils¶

The logic for constructing derivatives and finite difference schemes introduced above carries over higher order derivatives and higher order approximations in a straightforward manner. For example, second derivative with respect to of order two,

can be implemented via f.dx2. Note however, if we attempt to compute this using the f defined above we will see an error:

# f.dx2 # Uncomment to see the errorNote the AttributeError: f object has no attribute 'dx2'. Let us review the function documentation again:

print(Function.__doc__)

Tensor symbol representing a discrete function in symbolic equations.

A Function carries multi-dimensional data and provides operations to create

finite-differences approximations.

A Function encapsulates space-varying data; for data that also varies in time,

use TimeFunction instead.

Parameters

----------

name : str

Name of the symbol.

grid : Grid, optional

Carries shape, dimensions, and dtype of the Function. When grid is not

provided, shape and dimensions must be given. For MPI execution, a

Grid is compulsory.

space_order : int or 3-tuple of ints, optional

Discretisation order for space derivatives. Defaults to 1. ``space_order`` also

impacts the number of points available around a generic point of interest. By

default, ``space_order`` points are available on both sides of a generic point of

interest, including those nearby the grid boundary. Sometimes, fewer points

suffice; in other scenarios, more points are necessary. In such cases, instead of

an integer, one can pass a 3-tuple ``(o, lp, rp)`` indicating the discretization

order (``o``) as well as the number of points on the left (``lp``) and right

(``rp``) sides of a generic point of interest.

shape : tuple of ints, optional

Shape of the domain region in grid points. Only necessary if ``grid`` isn't given.

dimensions : tuple of Dimension, optional

Dimensions associated with the object. Only necessary if ``grid`` isn't given.

dtype : data-type, optional

Any object that can be interpreted as a numpy data type. Defaults

to ``np.float32``.

staggered : Dimension or tuple of Dimension or Stagger, optional

Define how the Function is staggered.

initializer : callable or any object exposing the buffer interface, optional

Data initializer. If a callable is provided, data is allocated lazily.

allocator : MemoryAllocator, optional

Controller for memory allocation. To be used, for example, when one wants

to take advantage of the memory hierarchy in a NUMA architecture. Refer to

`default_allocator.__doc__` for more information.

padding : int or tuple of ints, optional

.. deprecated:: shouldn't be used; padding is now automatically inserted.

Allocate extra grid points to maximize data access alignment. When a tuple

of ints, one int per Dimension should be provided.

Examples

--------

Creation

>>> from devito import Grid, Function

>>> grid = Grid(shape=(4, 4))

>>> f = Function(name='f', grid=grid)

>>> f

f(x, y)

>>> g = Function(name='g', grid=grid, space_order=2)

>>> g

g(x, y)

First-order derivatives through centered finite-difference approximations

>>> f.dx

Derivative(f(x, y), x)

>>> f.dy

Derivative(f(x, y), y)

>>> g.dx

Derivative(g(x, y), x)

>>> (f + g).dx

Derivative(f(x, y) + g(x, y), x)

First-order derivatives through left/right finite-difference approximations

>>> f.dxl

Derivative(f(x, y), x)

Note that the fact that it's a left-derivative isn't captured in the representation.

However, upon derivative expansion, this becomes clear

>>> f.dxl.evaluate

f(x, y)/h_x - f(x - h_x, y)/h_x

>>> f.dxr

Derivative(f(x, y), x)

Second-order derivative through centered finite-difference approximation

>>> g.dx2

Derivative(g(x, y), (x, 2))

Notes

-----

The parameters must always be given as keyword arguments, since SymPy

uses ``*args`` to (re-)create the dimension arguments of the symbolic object.

Note the optional argument space_order. We didn't previously pass this argument and hence it took the default value of 1. That is, defining it in this way, it can only support spatial derivatives of order one (or schemes of oder one). If we wish to construct higher order derivatives or schemes, we can do so as follows:

Pass the desired space_order when constructing a function. For example

f = Function(name='f', grid=grid, space_order=2)f.dx2.evaluateAnd if, for example, we wish to produce fourth order accurate first derivatives

we can do so in the following manner:

f = Function(name='f', grid=grid, space_order=4)

f.dx.evaluateBy default, Devito will produce schemes with the order space_order used when defining a Function.

We can override this by explicitly passing the space order of the desired scheme when creating a symbolic expression for a derivative. For example:

f.dx(fd_order=2).evaluateNote that fd_order cannot exceed the space_order with which a Function was defined.

Time derivatives follow a similar principle, we simply instead pass the desired time_order to a function, e.g.,

g = TimeFunction(name='g', grid=grid, time_order=2, space_order=4)g.dt2.evaluate+g.dy.evaluateTo implement the diffusion or wave equations, we must take the Laplacian , which is the sum of the second derivatives in all spatial dimensions. For this, Devito also provides a shorthand expression, which means we do not have to hard-code the problem dimension (2D or 3D) in the code. To change the problem dimension we can create another Grid object and use this to re-define our Function's:

grid_3d = Grid(shape=(5, 6, 7), extent=(1., 1., 1.))

u = TimeFunction(name='u', grid=grid_3d, space_order=2)

uWe can re-define our function u with a different space_order argument to change the discretisation order of the stencil expression created. For example, we can derive an expression of the 12th-order Laplacian :

u = TimeFunction(name='u', grid=grid_3d, space_order=12)

u.laplaceThe same expression could also have been generated explicitly via:

u.dx2 + u.dy2 + u.dz2Derivatives of composite expressions¶

Derivatives of any arbitrary expression can easily be generated:

u = TimeFunction(name='u', grid=grid, space_order=2)

v = TimeFunction(name='v', grid=grid, space_order=2, time_order=2)v.dt2 + u.laplace(v.dt2 + u.laplace).dx2Which can, depending on the chosen discretisation, lead to fairly complex stencils:

(v.dt2 + u.laplace).dx2.evaluate